We get a lot of requests about how to deliver SAP CPI without using SAP CTS+ or the Transport Management System. Both tools make it challenging to deliver integrations and to understand what is being changed.

Getting started with a DevOps setup can be a long journey. We developed the Figaf DevOps Tool to give you all the options for delivering SAP CPI in one box, with a single tool and without writing a lot of code. It covers all the areas you need:

- Automatic Testing of iflows

- Versioning of objects

- Transports of individual objects with Approval

- Global configuration of objects

- Documentation of changes

- Git synchronization with all needed templates, so you don’t need to set up a lot of stuff.

It takes about 1 hour to get started with the Figaf Tool, and we recommend trying it before you go with the Azure option. In any case, it provides the Git repository you will need for the process. This 20-minute demonstration shows how to install Figaf, create a Git repository and then create unit test cases for a Groovy script to deliver the integration.

We also get requests about how to deliver SAP CPI better with Azure DevOps or other platforms, since that is what customers already use to release other functionality. We therefore decided to set up a PoC of how you could deliver SAP CPI using our plugins. We have some open source Gradle plugins that allow you to download, upload, deploy and test SAP CPI iflows. They were designed for working in your IDE to speed up development, and they come in handy for this project.

Azure DevOps is a platform that provides flexible and useful ways to continuously deliver your projects. It can help you maintain SAP CPI faster and make delivery a lot more stable.

You could also use the same type of flow from GitHub Actions, Bitbucket Pipelines, Jenkins or whatever you use as your CI/CD tool.

The process shows how you can release SAP CPI iflows with the tool. The plugins also support SAP CPI value mappings and API Management proxies, so these could be delivered with the same approach.

Video Demonstration

Here you can see a video demonstration of how the tool works to manage a change.

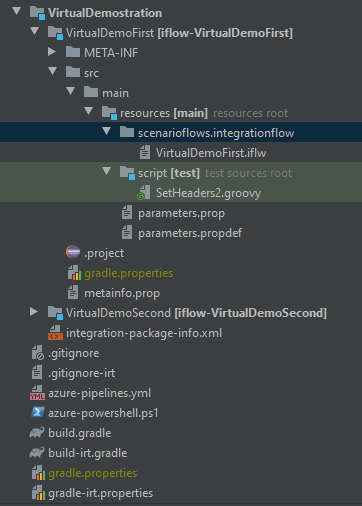

Repository

First, you need to create a repository with IFlows on Azure DevOps. You can also connect to an existing Git repository instead of creating a new one. The repository structure can vary, but we recommend treating each IFlow as a separate Gradle module. The Figaf DevOps Tool can synchronize with SAP CPI and generate this structure automatically.

You can also create the structure manually, as specified by the Gradle plugin: https://github.com/figaf/cpi-gradle-plugin

Note: We have changed the package structure in Figaf by adding an IntegrationFlow folder, so you will need to update the scripts accordingly.
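If you build the structure by hand, the root settings.gradle has to include every IFlow module. The Python sketch below generates those `include` lines. It assumes each IFlow is a top-level folder with its own build.gradle file; the folder layout (including where the IntegrationFlow folder sits) and the root project name are assumptions to adapt to your repository.

```python
from pathlib import Path


def gradle_includes(repo_root: Path) -> list[str]:
    """Return an `include` line for every top-level folder that has a build.gradle.

    Assumption: one top-level folder per IFlow module.
    """
    modules = sorted(
        child.name
        for child in repo_root.iterdir()
        if child.is_dir() and (child / "build.gradle").is_file()
    )
    return [f"include '{name}'" for name in modules]


def write_settings(repo_root: Path, root_project_name: str) -> Path:
    """Write settings.gradle with the root project name and one include per module."""
    lines = [f"rootProject.name = '{root_project_name}'", *gradle_includes(repo_root)]
    settings = repo_root / "settings.gradle"
    settings.write_text("\n".join(lines) + "\n", encoding="utf-8")
    return settings
```

Regenerating the file instead of editing it by hand means a newly synchronized IFlow folder is picked up automatically on the next run.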

Pipelines

Azure Pipelines is a cloud service that you can use to automatically build and test your code project and make it available to other users. https://docs.microsoft.com/ru-ru/azure/devops/pipelines/get-started/what-is-azure-pipelines?view=azure-devops

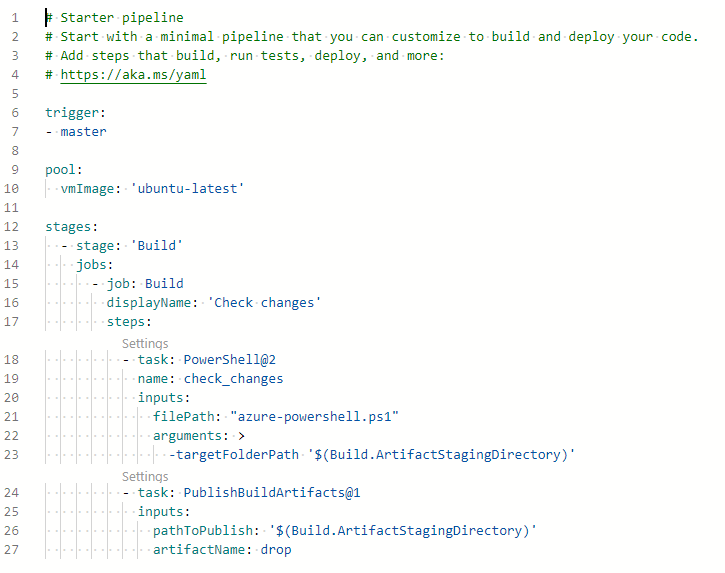

Technically, an Azure Pipeline is defined in a YAML file containing the steps needed for your build.

Basically, we need to answer two questions: when to build/test, and what to build/test?

In our example, the pipeline is triggered automatically after a commit or pull request to the master branch.

One of the biggest SAP CPI-specific challenges is defining which IFlows you want to work with. You may have plenty of IFlows, but it is quite likely that you have changed or created only a couple of them for the current release, so redeploying all the IFlows would be very inefficient. Therefore we need to determine which IFlows have changed. There are different ways to do this; in our example we rely on the git diff command, which returns the differences between the current and previous commits to master. The command is called from a PowerShell script, but it could also be configured as a bash command, a Gradle task or something else. There are also a couple of other possible approaches: define the list of needed IFlows manually, or use only the IFlows whose modification date is later than the previous build/commit date.
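A minimal sketch of the git diff approach, written in Python instead of the PowerShell script we used. It assumes each IFlow is a top-level module folder, so the first path segment of a changed file names the IFlow. The function names and the optional manual list (the "define the list manually" alternative) are illustrative, not part of our plugins.

```python
from __future__ import annotations

import subprocess


def changed_files(base: str = "HEAD~1", head: str = "HEAD") -> list[str]:
    """List the files that differ between two commits via `git diff --name-only`."""
    result = subprocess.run(
        ["git", "diff", "--name-only", base, head],
        check=True,
        capture_output=True,
        text=True,
    )
    return [line for line in result.stdout.splitlines() if line.strip()]


def changed_iflows(paths: list[str], manual: list[str] | None = None) -> list[str]:
    """Map changed file paths to IFlow module names.

    Assumes one top-level folder per IFlow, so the first path segment is the IFlow.
    Root-level files (e.g. settings.gradle) and hidden folders (e.g. .pipelines/)
    are ignored. A manual list, if given, replaces the diff-based result.
    """
    if manual:
        return sorted(set(manual))
    return sorted({p.split("/", 1)[0] for p in paths if "/" in p and not p.startswith(".")})
```

In a pipeline step you would call `changed_iflows(changed_files())`. On master, `HEAD~1` is the previous master commit, because for a merge commit it resolves to the first parent.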

The pipeline can also contain plenty of additional tasks, checks and tests; Azure DevOps provides many tasks out of the box.

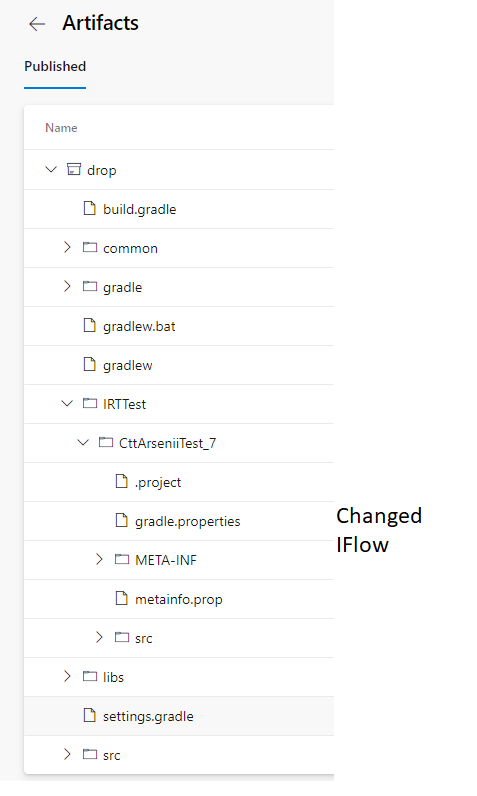

Either way, the result of the build pipeline is a published artifact. In our case, it is a projection of the repository that contains only the modified IFlows.
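One way to produce such a projection is to copy only the modified IFlow modules into a staging folder, which a publish-artifact step then uploads. This Python sketch assumes the one-folder-per-IFlow layout; the function name is hypothetical, and root-level Gradle files are deliberately left out because they may need to be added or regenerated for the subset.

```python
import shutil
from pathlib import Path


def build_projection(repo_root: Path, staging: Path, iflows: list[str]) -> list[Path]:
    """Copy the given IFlow module folders from repo_root into staging.

    IFlows that no longer exist in the repository (e.g. deleted ones) are skipped.
    Returns the folders that were actually copied.
    """
    staging.mkdir(parents=True, exist_ok=True)
    copied = []
    for iflow in iflows:
        src = repo_root / iflow
        if src.is_dir():
            dest = staging / iflow
            shutil.copytree(src, dest, dirs_exist_ok=True)
            copied.append(dest)
    return copied
```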

Release

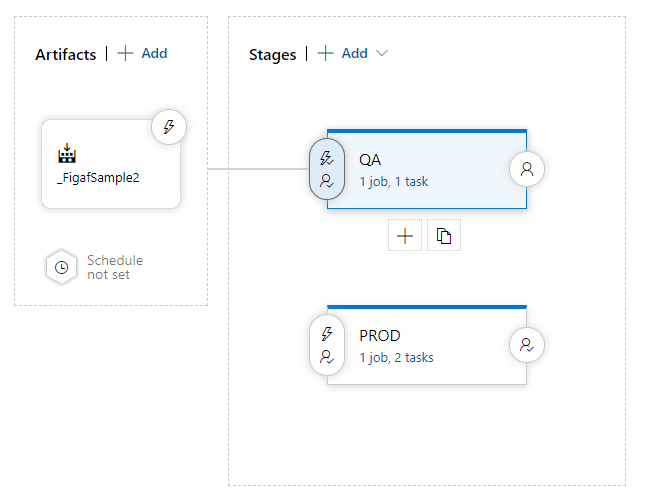

The next logical step is to deploy the needed IFlows to your QA/PROD environments. Azure DevOps Releases provide powerful functionality for this: you can configure a list of approvers for different stages, deployment triggers and plenty of other settings.

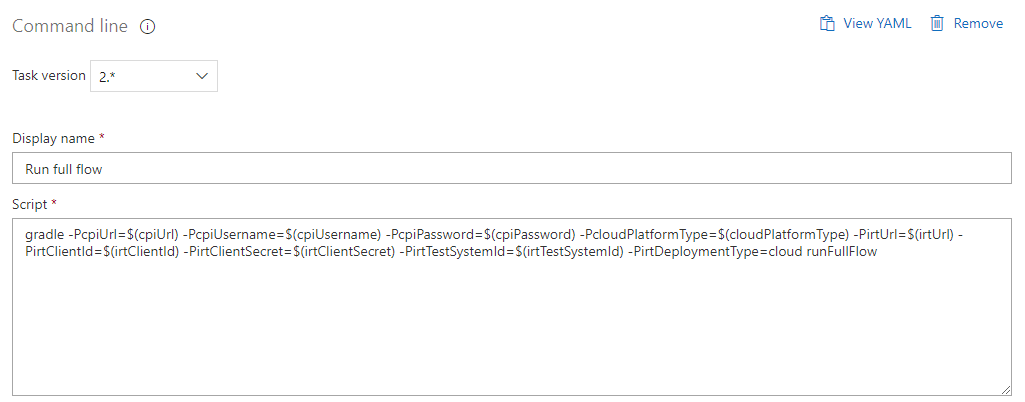

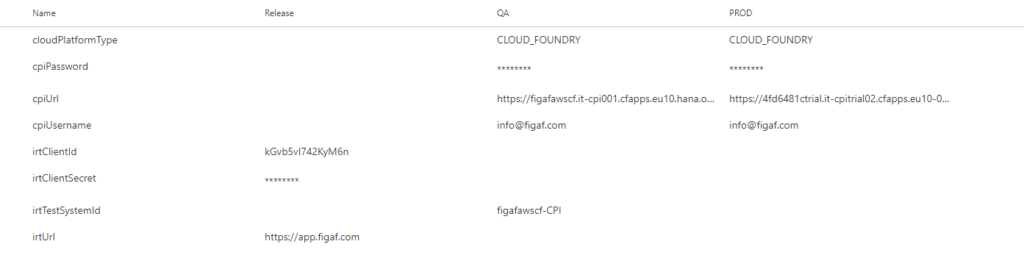

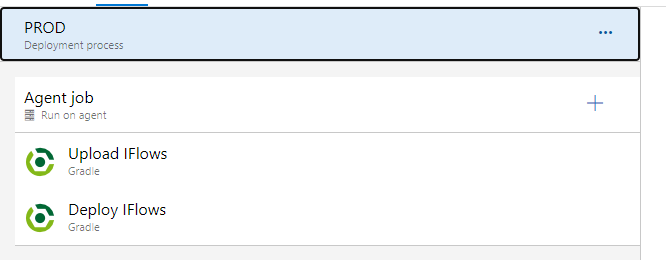

Assume that we want to upload, deploy and test IFlows on the QA server, and only upload and deploy them on PROD. We have developed a couple of open source plugins that can really speed up your development: https://github.com/figaf

For the QA stage, it is enough to execute a single task called runFullFlow. This task runs the unit tests for the IFlow, uploads and deploys the IFlow, and then runs integration tests using the Figaf Tool.

The parameter values needed for the tasks are defined in the Variables section.
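Azure Pipelines exposes non-secret variables to scripts as environment variables, with the name uppercased and periods replaced by underscores. The Python sketch below turns such variables into Gradle `-P` project properties. The property names `cpiUrl`, `cpiUsername` and `cpiPassword` are hypothetical; use the names your build scripts and plugin configuration actually expect.

```python
import os
from collections.abc import Mapping

# Hypothetical Gradle property names; replace with the ones your build expects.
PROPERTY_NAMES = ["cpiUrl", "cpiUsername", "cpiPassword"]


def to_env_name(variable: str) -> str:
    """Azure Pipelines exposes a variable such as `cpi.url` to scripts as `CPI_URL`."""
    return variable.upper().replace(".", "_")


def gradle_properties(
    env: Mapping[str, str] = os.environ, names: list[str] = PROPERTY_NAMES
) -> list[str]:
    """Turn pipeline variables into Gradle `-P` arguments, failing fast if one is missing."""
    args = []
    for name in names:
        value = env.get(to_env_name(name))
        if value is None:
            raise KeyError(f"Pipeline variable '{name}' is not available to the script")
        args.append(f"-P{name}={value}")
    return args
```

Secret variables are not mapped to environment variables automatically; they must be mapped explicitly in the step's `env` section. Since `-P` arguments are visible in process listings, passing secrets as `ORG_GRADLE_PROJECT_<name>` environment variables, which Gradle also reads as project properties, may be the safer choice.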

When the QA deployment finishes successfully, you can move on to the PROD deployment. Here it is probably enough to upload and deploy the IFlows without running integration tests.
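The QA/PROD split can be expressed as one Gradle invocation per stage, running each stage's tasks for every changed IFlow module via Gradle's `:module:task` paths. Only runFullFlow comes from the text; the PROD task names in this Python sketch are hypothetical placeholders, so check the plugin README for the real upload and deploy task names.

```python
# Tasks per release stage. runFullFlow is the QA task described in the text;
# the PROD names are hypothetical placeholders for "upload and deploy only".
STAGE_TASKS = {
    "QA": ["runFullFlow"],
    "PROD": ["uploadIntegrationFlow", "deployIntegrationFlow"],
}


def gradle_command(stage: str, iflows: list[str], wrapper: str = "./gradlew") -> list[str]:
    """Build one Gradle command line running the stage's tasks for each IFlow module."""
    if stage not in STAGE_TASKS:
        raise ValueError(f"Unknown stage: {stage}")
    args = [wrapper]
    for iflow in iflows:
        for task in STAGE_TASKS[stage]:
            args.append(f":{iflow}:{task}")
    return args
```

A release stage could then run, for example, `subprocess.run(gradle_command("QA", ["MyIflow"]), check=True)`, where `MyIflow` is a hypothetical module name. Keeping the stage-to-task mapping in one place makes the difference between QA and PROD explicit.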

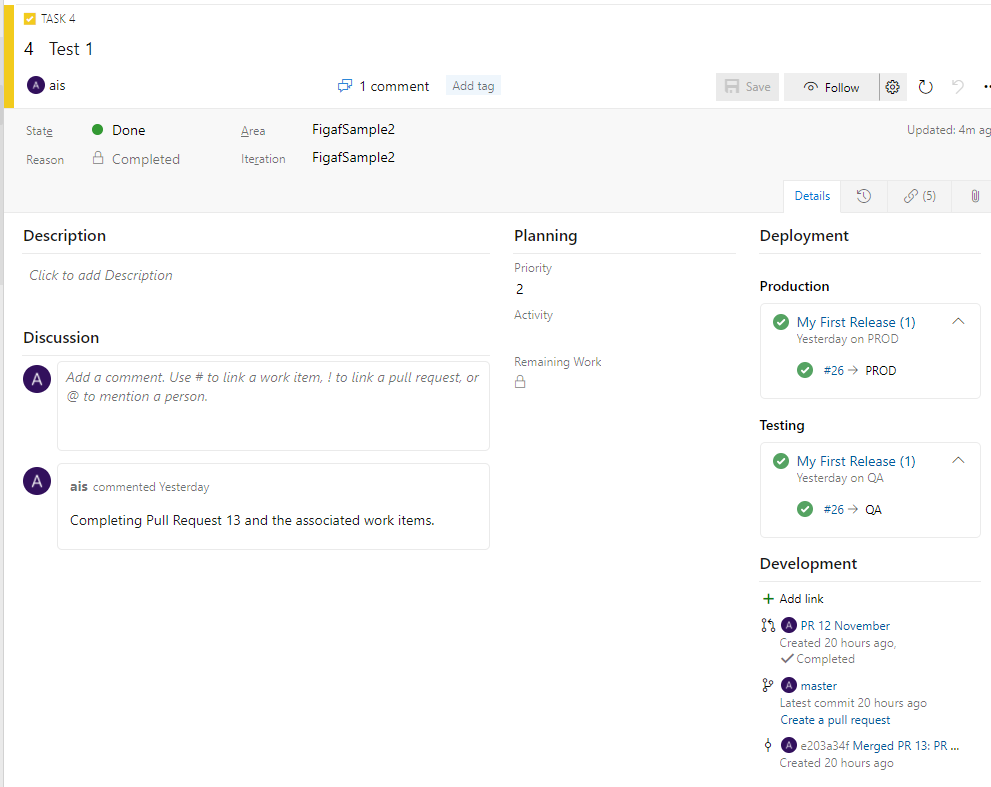

Boards

All your activity can be connected to a work item, which is basically a task or issue. On the work item you can see the related commits, pull requests, deployments, etc. Because these are connected to the Git changes, you can see which code was deployed for each change.

Git Flow

Having two places where you edit your iflows adds some complexity. You will need to:

- Update BPMN models in the WebUI.

- Update resources, including Groovy scripts, in the IDE.

You will need to synchronize between the two editors, and you can use our Gradle plugins for this. Once the developers have perfected the iflow, they should create a pull request to the master branch. Once the pull request has been approved, the build process starts.

Only one developer should work on an iflow at a time; otherwise they risk overwriting each other's changes. You could make copies of the iflows and make the modifications in them, but that would create a management challenge. The only way around it would be if developers had a local WebUI and runtime they could use to develop and run the iflow.

Sure, a developer could change a single Groovy script and create a pull request for it, but it is still a challenge to build a good workflow around this.
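Since the rule is one developer per iflow, a simple guard is to check whether two open branches touch the same IFlow. This Python sketch is a hypothetical helper, not part of our plugins; it assumes you already have the changed IFlows per branch, for example from `git diff --name-only` against master.

```python
from collections import defaultdict


def overlapping_iflows(changes_by_branch: dict[str, list[str]]) -> dict[str, list[str]]:
    """Return the IFlows changed on more than one branch, with the branches involved."""
    branches_by_iflow: dict[str, set[str]] = defaultdict(set)
    for branch, iflows in changes_by_branch.items():
        for iflow in iflows:
            branches_by_iflow[iflow].add(branch)
    # Keep only IFlows that more than one branch is modifying.
    return {
        iflow: sorted(branches)
        for iflow, branches in sorted(branches_by_iflow.items())
        if len(branches) > 1
    }
```

Running this as an early pipeline check flags a conflict before one developer's WebUI export overwrites another's.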

Challenges

This is a proof of concept of how the process would work. Much more work is needed to make it a production-ready solution.

To set this up, you will need to spend some time getting it running and then configuring it according to your requirements. There is a lot of code you need to write and verify to ensure the process works as expected.

The configuration of iflows, i.e. their externalized parameters, is different on each platform. You will need to find a way to add it to the process or perform the configuration manually.
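One way to add configuration to the process is to keep per-environment values in the repository and apply them to the iflow's externalized parameters before upload. In the iflow archives we have seen, these values are typically stored in a parameters.prop file in Java properties format. This Python sketch is a simplified, hypothetical helper: it only handles `key=value` lines and does not implement the full properties escaping rules.

```python
def apply_overrides(prop_text: str, overrides: dict[str, str]) -> str:
    """Replace values of externalized parameters in parameters.prop-style text.

    Simplified: only `key=value` lines are parsed; comments and other lines are kept
    as-is. Raises KeyError if an override targets a parameter the iflow does not have.
    """
    out = []
    seen = set()
    for line in prop_text.splitlines():
        stripped = line.strip()
        if stripped and not stripped.startswith(("#", "!")) and "=" in line:
            key = line.split("=", 1)[0].strip()
            if key in overrides:
                out.append(f"{key}={overrides[key]}")
                seen.add(key)
                continue
        out.append(line)
    missing = set(overrides) - seen
    if missing:
        raise KeyError(f"Parameters not found in iflow: {sorted(missing)}")
    return "\n".join(out) + "\n"
```

Failing on unknown keys catches typos in the environment files, which would otherwise silently leave a development value in QA or PROD.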

Transport with virtual landscapes. One of the features we have in the Figaf Tool is the ability to reuse your development system as a test system.

Testing the iFlows. DevOps requires a good testing setup that allows you to create tests and run them for each change, so you can ensure you are not breaking anything. In this PoC, we used the Figaf Tool to run the tests. You can specify which test suite will be used for each iflow.
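The per-iflow test suite assignment can be thought of as a simple lookup applied to the changed IFlows. This Python sketch is hypothetical (the Figaf Tool holds the real configuration); it stops the pipeline when a changed IFlow has no suite, which enforces that every change is tested.

```python
def suites_to_run(
    changed: list[str], suite_by_iflow: dict[str, str], require_tests: bool = True
) -> list[str]:
    """Pick the test suites for the changed IFlows.

    With require_tests=True, a changed IFlow without a configured suite raises an error.
    """
    untested = [i for i in changed if i not in suite_by_iflow]
    if untested and require_tests:
        raise RuntimeError(f"No test suite configured for: {', '.join(untested)}")
    # Several IFlows may share a suite; run each suite once, keeping first-seen order.
    return list(dict.fromkeys(suite_by_iflow[i] for i in changed if i in suite_by_iflow))
```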

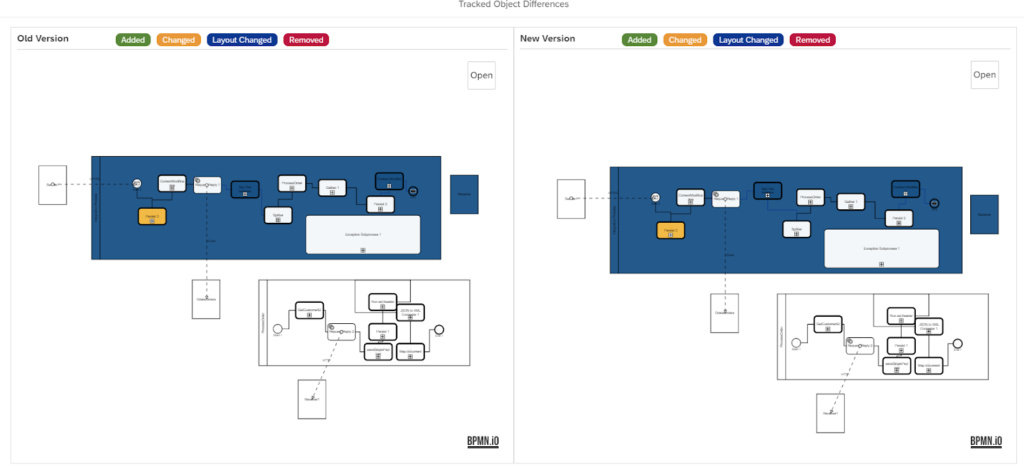

When you perform the approval, you can easily see Groovy or text changes. However, a big part of the changes is in the iFlow BPMN model, which is not very readable as a text diff, so in the Figaf Tool we have added a visual comparison of the differences. See the example.

The Figaf DevOps Tool provides some other ways of delivering the integration. The tool uses the SAP CPI system as the source repository, so technically it would not be correct to connect its ticket system to this flow.

If you want our help to improve your flow, just use the contact button.

Alternative

The alternative we recommend is the Figaf DevOps Tool. It is simple to set up and does not require any coding to get working. For a PoC, you can be up and running in 60 minutes directly on your laptop. We also have a cloud offering where you can test the setup.

And no matter which option you choose, it gives you a simple way to initialize the Git repository.